Jessica James and Kerim Genc, Synopsys, 02.15.22

Artificial Intelligence (AI) [1] provides many potential benefits for orthopedic medical device manufacturers, from the ability to automate large parts of previously manual workflows, to generating fresh insights into patient-specific data. When combined with other technologies like 3D scanning, image processing, simulation, and 3D printing, it is possible to reduce time and improve flexibility at every stage of the device design and manufacturing process. However, challenges remain regarding regulatory controls, accuracy and the skills needed to use these technologies.

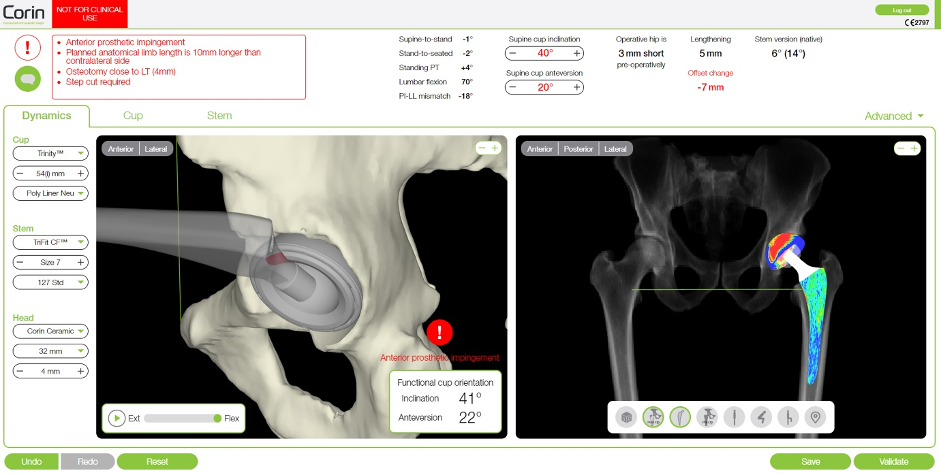

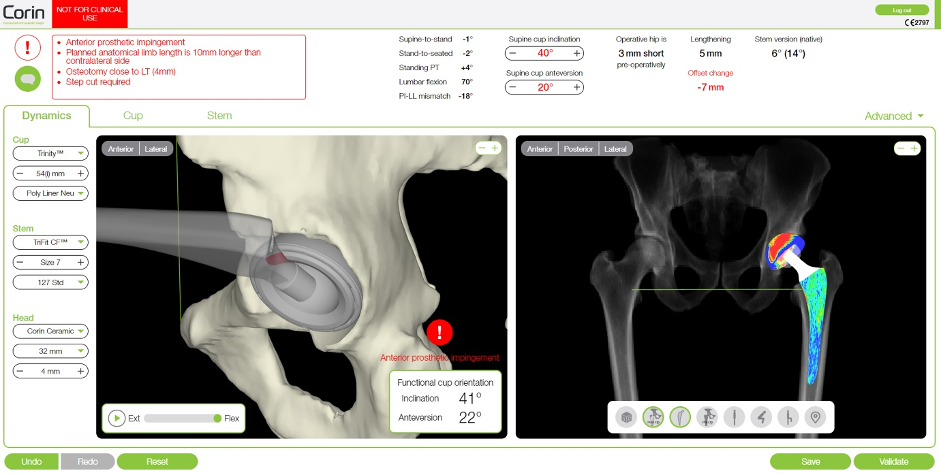

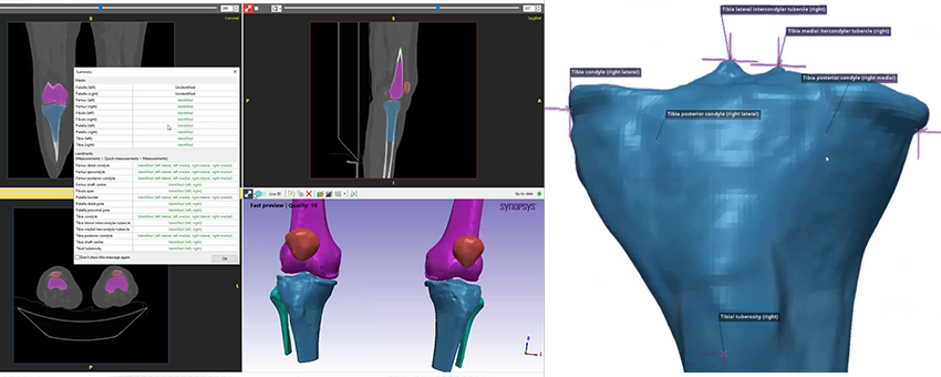

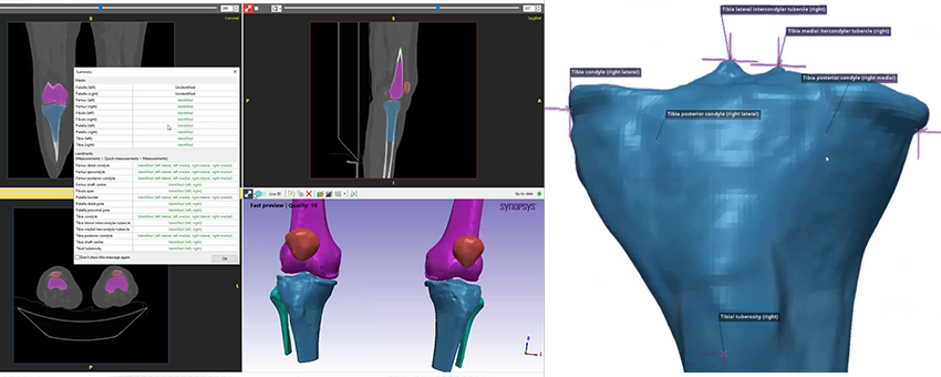

On the clinical and academic research side, Synopsys has worked with institutions such as University College London (UCL) and the Royal National Orthopaedic Hospital (RNOH) to apply and demonstrate AI technology that cuts segmentation and landmarking time when going from 3D images to 3D printed surgical guides. Separately, on the commercial side, Synopsys has been working with partners and customers such as Corin, nTopology and Carbon to demonstrate how these CT-based workflows can be deployed and scaled commercially. In particular, Corin Group has adopted AI as a key element of the orthopedic workflows run by its Optimized Positioning System (OPS) team. Corin has worked with Synopsys for many years on improving its workflow for patient-specific surgical planning, design, and simulation.

Considering the various obstacles involved in meeting the demands of scaling for common and more complex orthopedic cases, how are these organizations exploring AI as a solution? In addition, how are these trends more generally being viewed by clinicians and those working in academic research?

AI Trends in Orthopedics

The use of AI in the medical device industry has been accelerated over the past year, to some extent, by the impact of the COVID-19 pandemic, as manufacturers look for alternative, more efficient ways to plan surgeries and monitor patients. Key trends have included incorporating AI into medical imaging devices, as well as into wearable technologies [2]. By contrast, AI algorithms for surgical planning and orthopedic design face the additional complication of potentially being applied to patient diagnosis and procedures, which raises the regulatory bar for acceptance.

Debate is therefore under way within the FDA over how AI can be effectively regulated, particularly when using “adaptive” algorithms that continuously learn, as opposed to those that are “locked” and can be reasonably validated. Given the speed of change associated with AI, options such as adopting a total product lifecycle approach to using new technologies are being explored. Finding a balance between innovation and ensuring that Machine Learning and other AI techniques are risk-assessed before being applied to diagnosis and orthopedic surgical plans is therefore crucial to furthering acceptance.

In this context, the FDA is having to update [3] its traditional approach to regulating medical devices to handle the uncertainty and the novelty presented by AI, as well as the impact that this has on making suitable risk assessments. While this thinking is still being worked out, how the technology can be safely applied for patients depends on factors such as the reliability of algorithms, the availability of patient data and the need for longer-term studies of the efficacy of different methods.

Some of the key benefits for AI in orthopedics that were being predicted a few years ago [4] are therefore still complicated by these validation demands, and also by the often-steep learning curves associated with adopting new software in hospital labs. Hesitation around getting to grips with the technology can make it harder to build trust in adapting proven methods, and to justify budget increases to procure it.

Indeed, one argument is that the technology will, in the long term, be better suited to indirectly assisting clinical decision-making [5], rather than necessarily replacing current practices. To this end, AI could reduce the time burden of relatively straightforward tasks at early stages of pre-surgical planning workflows involving device design and patient-specific data, allowing doctors and surgeons to focus on diagnostic decision-making and patient care. Virtual reality and augmented reality [6] can also help with surgical training, and make it easier to carry out procedures through 3D visualization. Similarly, the use of robotics is often tied to manual control by a surgeon to aid in precision, improving the potential for successful outcomes for patients.

Enhancing Proven Workflows with AI

Corin Group is a company that has benefited from strengthening its overall systems with AI, particularly in the case of its FDA 510(k)-cleared Optimized Positioning System (OPS) technology for virtual surgical planning. Several years ago, Corin and Synopsys already had a process in place [7] for combining 3D image data, model generation, simulation and 3D printing, ahead of robot and laser-assisted surgical guides. This approach has had particular success for optimal orientation of the acetabular component in total hip arthroplasties. OPS was launched in the United States in Nov. 2016, and has since been used for procedures involving over 20,000 patients worldwide, while being available in 13 countries and in use by almost 400 surgeons internationally [8]. What, then, has changed in subsequent years, and how has AI played an important role in making OPS even more efficient?

The established Corin workflow involves taking 3D images of patient anatomies from computed tomography (CT) and X-ray computed tomography (X-Ray CT) scans, before importing to Simpleware software to segment regions of interest and add anatomical landmarks. Scripting was used to speed up these steps, with the resulting models simulated within the OPS system to explore movement and orientation. When combined with Computer-aided Design (CAD) implants that are fitted to patient data, 3D printed guides could be generated as a clinical resource to plan procedures, including through a laser-guided alignment system.

While this process proved to be efficient and repeatable for different cases, the time spent on segmentation and landmarking remained a bottleneck for Corin in creating a patient-specific model for analysis. To this end, Synopsys worked with Corin engineers to apply new Machine Learning-based AI technologies to image data, with the goal of completely automating the steps of going from the patient image data to a segmented model, making it easier to get to the more valuable simulation stage. This almost complete elimination of segmentation time would enable the OPS group both to scale up without additional headcount and to capture the expertise within the AI algorithm to minimize the effects of employee turnover.

To achieve this improvement, Synopsys incorporated Simpleware Custom Modeler, a Machine Learning-based AI tool trained using patient-specific anatomical data. Custom Modeler is a customized solution that involves users and Synopsys engineers working together to create an automated workflow, principally for segmenting and landmarking data, but also for obtaining measurements, statistics, reports, meshes and other outputs.

Figure 1: Implant planning in the Corin OPS system (Image courtesy of Corin)

When added to Corin’s established workflow, Custom Modeler effectively automates segmentation and landmarking much faster than both manual tasks and previously used scripts, typically requiring very limited to no corrections to exported models. Imported CT data is run through Custom Modeler filters via a single click of a button, with additional options for batch processing project files. The total time spent per case was reduced by an average of 94%.

In this context, automation can be viewed as starting from a baseline in which manual, off-the-shelf segmentation workflows take 100% of engineering time per case. For Synopsys and Corin, the development of non-AI scripting plugins reduced this time by 65% per case, significantly easing the burden on engineers processing patient data. Once the first iteration of the Simpleware Custom Modeler plugin was introduced, the time went down by 80% per case relative to the original workflow, with two-thirds of the resulting workflow being computer time with no human interaction and the remaining third spent on manual tidying of the segmented model. Finally, the next version of Custom Modeler, enhanced with additional ground truth training data, cut engineering time by 94% per case, again with two-thirds being computer time and a third for tidy-up.
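The percentages above are easier to compare once they are converted into time remaining per case. The following is a minimal sketch of that arithmetic, assuming that the "two-thirds" and "one-third" figures describe the split of the time that remains after each reduction; the stage labels and function names are illustrative and are not taken from Synopsys or Corin.

```python
from fractions import Fraction

# Reduction in per-case time versus the manual baseline, as quoted in the text.
# The compute share is the assumed fraction of the remaining time that runs
# unattended; the text gives two-thirds for both Custom Modeler versions and
# no split for the earlier stages, so those are left as None.
STAGES = [
    ("manual baseline", Fraction(0), None),
    ("non-AI scripting", Fraction(65, 100), None),
    ("Custom Modeler, first iteration", Fraction(80, 100), Fraction(2, 3)),
    ("Custom Modeler, next version", Fraction(94, 100), Fraction(2, 3)),
]


def breakdown(reduction, compute_share):
    """Return (remaining, unattended, hands_on) as fractions of the baseline."""
    remaining = 1 - reduction
    if compute_share is None:
        return remaining, None, None
    unattended = remaining * compute_share
    return remaining, unattended, remaining - unattended


def as_percent(value):
    """Format a fraction of the baseline as a percentage string."""
    return "n/a" if value is None else f"{float(value) * 100:.1f}%"


for name, reduction, share in STAGES:
    remaining, unattended, hands_on = breakdown(reduction, share)
    print(f"{name}: remaining {as_percent(remaining)}, "
          f"unattended {as_percent(unattended)}, hands-on {as_percent(hands_on)}")
```

Under this reading, the 94% reduction leaves 6% of the original per-case time, of which roughly 4% is unattended computer time and 2% is manual tidy-up.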

“The Simpleware technology is integral to our production system. We have always had a very good relationship with Synopsys and regularly are able to reach out for their support,” commented Alicia Miller, Production Engineering Manager at Corin. “The introduction of Simpleware’s Custom Modeler has not only reduced segmentation time but also enabled faster training of the production process to new staff.”

Rapid Fabrication of Patient-Specific Cutting Guides

As well as work on developing pre-surgical plans, progress is being made in the area of creating customizable medical devices. The use of customized implants and guides derived from patient-specific scan data has grown in prominence over the last few years, including for specific procedures such as spinal fusion [9]. In addition, 3D printed patient-specific surgical guides are being deployed by the likes of Johnson & Johnson, and also provide several key advantages in areas such as total knee arthroplasties [10].

Synopsys recently collaborated with software company nTopology and 3D printing specialist Carbon on a process for designing and fabricating a patient-specific tibial surgical cutting guide. This approach combines 3D imaging and processing with design automation and 3D printing, as well as AI technologies, to create a fast and scalable workflow. Among this project’s key goals was to speed up manual segmentation and streamline the common steps needed to go from a patient scan through to a usable print, thus saving time and costs for medical device manufacturers, as well as reducing manual intervention for surgical planning.

The first step to creating a 3D printed cutting guide involves acquiring patient-specific image data through MRI and/or CT scans. In this case, Synopsys used the Simpleware AS Ortho tool for automatic segmentation of knee CT scans, with the method representing a more off-the-shelf option than the customized approach taken with Corin. DICOM CT data of the knee was imported and tags checked to ensure anonymization of patient information, before visualizing the anatomy as 2D slices and creating a 3D model using background volume rendering.
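The tag check mentioned above can be illustrated with a short sketch. It is not the Simpleware implementation: it assumes the DICOM header has already been read into a plain dictionary keyed by standard DICOM attribute keywords, and the list of keywords, the placeholder values and the function name are illustrative choices rather than a complete de-identification profile.

```python
# Standard DICOM attribute keywords that commonly carry patient identity.
# This is an illustrative subset, not a complete de-identification profile.
IDENTIFYING_KEYWORDS = (
    "PatientName",
    "PatientID",
    "PatientBirthDate",
    "PatientAddress",
    "ReferringPhysicianName",
    "InstitutionName",
)

# Values treated as already anonymized (an assumption made for this sketch).
ANONYMOUS_VALUES = {"", "ANONYMOUS", "ANONYMIZED", "NONE"}


def find_identifying_tags(header):
    """Return the keywords in `header` that still hold a non-placeholder value."""
    flagged = []
    for keyword in IDENTIFYING_KEYWORDS:
        value = header.get(keyword)
        if value is None:
            continue  # attribute absent from the header
        if str(value).strip().upper() not in ANONYMOUS_VALUES:
            flagged.append(keyword)
    return flagged


# Hypothetical header values, used only to exercise the check.
example_header = {
    "PatientName": "ANONYMOUS",
    "PatientID": "KNEE-0001",
    "Modality": "CT",
}
print(find_identifying_tags(example_header))
```

A scan would only move on to segmentation once the returned list is empty; in this example the hypothetical `PatientID` value is flagged for review.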

AS Ortho was then employed to automatically segment common regions of interest in the knee, creating a summary report detailing the obtained parts of the bone and anatomical landmarks. The segmented bones were converted into surface objects suitable for 3D printing through a “mask to surface” tool and an ID number was added using embossed text. The resulting knee model was exported as 3MF files to nTopology for design and optimization of the cutting guide.

Figure 2: Simpleware software was used to automatically segment the knee and add landmarks (Image courtesy of Synopsys)

Bone geometries and anatomical landmarks from Simpleware software were used in nTopology to design a patient-specific tibial cutting guide, utilizing implicit modeling technologies to generate unbreakable geometries. For this workflow, nTopology engineers generated a conformal geometry for the cutting guide from the patient-specific anatomy, factoring in structural support columns for manufacturing. Cutting guide features were added based on the anatomical landmarks, such as an insertion panel and cutting plane, before meshing the model and exporting it as a 3MF file to the Carbon DLS process.

According to Christopher Cho, Staff Application Engineer at nTopology, their software’s “ability to accommodate complex and organic shapes such as patient-specific bone scans means that the software also excels at creating conformal geometry that can be accurately tailored towards a more optimal patient outcome.”

For the final stage of this process, the physical tibial cutting guide for the patient was manufactured by Carbon, which involved uploading and orienting the file for their M2 printer. MPU 100 material was chosen for its biocompatibility and sterilization capabilities. Metadata from the Simpleware and nTopology files can also be used to trace the guide back to the original anatomical data. Carbon added supports for the print process, before positioning and duplicating the part in their software platform. The print time was two hours and twenty-five minutes, after which the model was washed and the supports removed, before baking to obtain the final product. At this point, the cutting guide is ready for sterilization, packaging and dispatch to a clinician for inspection.

Taken together, the workflow demonstrated flexibility for different cases and design requirements, as well as opportunities to add more data to the AI algorithm used for the Simpleware software knee segmentation. One of the key benefits of this approach is that it can be scaled to larger and potentially more complex situations. In the future, the process could be applied to clinical practice through a dedicated 3D printing hub within a hospital. In-house centers of this type are becoming recognized [11] as an efficient alternative to sending data to an external company.

Implementing hubs within hospitals means that surgeons can be involved in multiple stages of implant planning, resulting in shorter design and manufacturing times. In particular, fast and almost wholly automated AI-based segmentation means that the technical obstacles to creating patient-specific models can be mitigated, requiring less manual intervention and quicker integration within established point-of-care (POC) tasks.

Synopsys’ Kerim Genc has worked on these projects for adding more 3D planning and AI to existing applications, and has praised the results to date and prospects for the future:

“Nothing gets me more excited than speaking to a device company’s patient-specific group that is in the process of scaling up but struggling due to scaling issues. We get to apply our AI enabled solutions to help them reduce/eliminate bottlenecks in their manual segmentation and measurement workflow, eliminate the need to hire more technicians to cope with the case load, and help them capture the segmentation expertise in the algorithms and not be limited by employee turnover and training. It is a very exciting time.”

The Surgeon’s View

Outside of these commercial projects, those working directly in patient-specific orthopedic surgical planning are also engaging with how a range of image-based techniques can help with patient outcomes, particularly in the case of challenging revision surgeries. For example, at University College London (UCL) and the Royal National Orthopaedic Hospital (RNOH), researchers and surgeons are working together to use CT-planning, AI with Simpleware software and 3D printing to plan complex surgeries. In this context, pre- and post-operative scanning for revision cases is particularly valuable for getting a better understanding of a patient’s needs before hip replacements.

While there are still challenges involving reducing the effect of metal artefacts, and making technologies more accessible and cost-effective for hospitals, 3D CT-planning mitigates the risk of complications and potentially increases the lifetime of devices. Johann Henckel, Researcher and Orthopedic surgeon at RNOH, has commented here on how, ideally, “every patient should have CT-based-planned hip replacement. Of course (CT-based planning for) younger patients is a must, complex anatomy a must, but I think there will be a move towards it being standard practice.”

Prof. Allister Hart, Orthopedic Surgeon, UCL and RNOH, has noted that the value of these techniques means “the case is very easy to make for really complicated surgery”; extending them to every primary operation is another question. However, Professor Hart has also said: “I think much wider adoption might come, but I would predict that 50% of hips within the next 10 years, could be CT-planned.”

The Future for AI in Patient-Specific Healthcare?

As these studies have shown, AI has the potential to enhance existing workflows, as well as to support more general improvements in patient-specific surgical planning and orthopedics. Medical device manufacturers and clinicians can benefit from improved design knowledge, a reduced reliance on costly physical testing and the ability to integrate different technologies into single processes.

However, questions over AI and other advances in imaging, patient-specific data and simulation do remain, and it is important to remember that any changes are not currently replacing traditional methods. The greatest value of applying AI, 3D printing and virtual surgical planning will arguably be in how they simplify time-intensive workflows, capture the knowledge and experience needed to perform segmentation effectively, enable technicians, engineers and clinicians to focus on higher value tasks, and increase the range of diagnostic options available to surgeons.

Disclaimer: Prof. Allister Hart and Johann Henckel do not endorse or have any commercial relationships with Corin Group, Carbon or nTopology.

References:

1. https://www.odtmag.com/issues/2021-05-01/view_columns/orthopedics-is-slowly-wising-up-to-ai-tech/?widget=listSection

2. https://www.analyticsinsight.net/ai-in-medical-devices-these-are-the-emerging-industry-application/

3. https://www.fda.gov/medical-devices/software-medical-device-samd/artificial-intelligence-and-machine-learning-software-medical-device

4. https://www.odtmag.com/issues/2018-08-01/view_columns/the-promising-future-for-ai-in-orthopedics/

5. https://online.boneandjoint.org.uk/doi/full/10.1302/2058-5241.5.190092

6. https://medicalfuturist.com/the-technological-future-of-surgery/

7. https://www.odtmag.com/contents/view_online-exclusives/2017-08-08/patient-specific-simulation-breakthroughs-for-total-hip-replacements/

8. https://www.coringroup.com/about-us/our-news/ops-reaches-20000-cases-performed-worldwide

9. https://www.odtmag.com/contents/view_online-exclusives/2017-03-08/personalizing-spinal-fusion/

10. https://josr-online.biomedcentral.com/articles/10.1186/s13018-021-02310-y

11. https://healthcare-in-europe.com/en/news/reforming-surgical-procedures-with-3d-printing-hubs-in-hospitals.html

Jessica James, Ph.D., is a business process analyst at the Simpleware Product Group at Synopsys. She is based in the UK.

Kerim Genc is a business development manager for Synopsys.