Linda Braddon, Ph.D., President and CEO, Secure BioMed Evaluations, 02.12.18

Successful regulatory submissions are critical for the commercialization of an orthopedic device. U.S. Food and Drug Administration (FDA) clearance of a product is not the only factor in commercial success, but it is certainly one of the key components. Showing that a device is substantially equivalent to a legally marketed product, and reassessing the device after clearance when the design changes, are both critical. This article highlights an effective process for securing a substantial equivalence ruling and for evaluating future design changes to determine whether a new submission must be filed.

Proving Substantial Equivalence for Clearance

One of the most difficult components of a regulatory submission for product clearance is proving substantial equivalence to predicate and reference devices. During the design process, there is typically a design input/output file that details various device characteristics. An important section of this document, in orthopedics, is the mechanical performance criteria. Acceptable performance criteria can be determined in many ways, including published data, justifications of acceptance criteria based on physiologic loading, and side-by-side comparative figures.

My company helps many small firms gain orthopedic product clearance. We spend lots of time educating clients on the use of predicates and reference devices in a 510(k) submission. A primary predicate sets the tone for indications for use. The closer the indications for use statement is to the primary predicate, the more likely it is to be accepted by FDA. Other predicates (sometimes called reference devices) can be used for a technological comparison, including material choices and mechanical performance.

In orthopedics, the mechanical performance section of a 510(k) submission is critical. Medtech newcomers are often surprised at the difficulty of pulling data from competitive devices to compare strength. I am frequently asked by new clients to pull competitive 510(k) data to make comparisons easy, but that information is not typically accessible. While the Freedom of Information Act can be leveraged to access 510(k) data, this process can be time-consuming and unreliable in securing the necessary information. Here’s why: Before FDA sends the submission to the requestor, it is sent to the sponsor for redaction, and the sponsor will typically protect all sensitive and interesting material. Occasionally, useful data can be found in a redacted document, but this is not the norm.

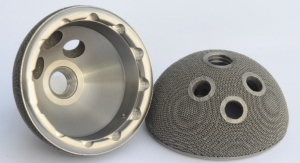

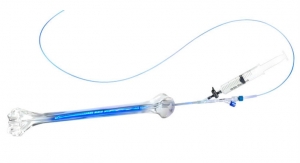

The best way to get comparative testing data for correlation purposes is side-by-side testing. Such a suggestion usually strikes fear in the hearts of even the bravest entrepreneurs, as this type of analysis essentially doubles the cost of testing. Cost, however, is not as burdensome as the actual acquisition of competitive parts. Companies cannot realistically call their competitors and place orders. Acquiring competitive devices raises both financial and ethical questions; even upon acquisition, there are unlikely to be enough components to perform a full battery of comparative testing. In these instances, testing matrix reviews and strategic comparative testing are critical. Case in point: For some devices that typically undergo both static and dynamic testing, there may only be enough competitive devices to characterize static strength. When performing side-by-side testing, it is crucial to analyze the worst-case configuration to determine whether the competitive device is likely to be an equivalent or inferior performer. Take orthopedic screws, for example. An implant with 6 mm-diameter screws must be “worst-case” tested against a competitive and legally marketed device with 5 mm-diameter screws. Proving superiority against the smaller-sized screw will give the FDA confidence the larger diameter screw is sufficient. One area in which side-by-side testing is practically unavoidable is wear testing. Wear testing results vary depending on the machine used, set-up, testing fluid, and other factors. Although it is possible to compare wear debris generation to literature values, there must be a strong scientific justification that the testing setup (and, therefore, the results) is comparable.
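As a rough illustration of how side-by-side static results might be summarized, the sketch below compares mean failure loads for a subject device and a competitive device. The function name and all load values are hypothetical placeholders rather than real test data, and a simple comparison of means is an assumption made here for brevity; an actual submission would rely on the statistical method and sample size called for by the applicable test standard.

```python
from statistics import mean, stdev


def compare_static_strength(subject_loads, predicate_loads):
    """Summarize two sets of static failure loads (same units) and report
    whether the subject device's mean is at least the predicate's mean."""
    subject_mean = mean(subject_loads)
    predicate_mean = mean(predicate_loads)
    return {
        "subject_mean": subject_mean,
        "subject_sd": stdev(subject_loads),
        "predicate_mean": predicate_mean,
        "predicate_sd": stdev(predicate_loads),
        "subject_at_least_equivalent": subject_mean >= predicate_mean,
    }


# Placeholder failure loads in newtons; NOT real test data.
subject = [1010.0, 980.0, 1025.0, 995.0, 1000.0]
predicate = [900.0, 940.0, 915.0, 925.0, 920.0]

result = compare_static_strength(subject, predicate)
print(result)
```

When only a handful of competitive parts can be acquired, the standard deviations in this summary help show how much weight a small sample can bear.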

A much easier way to show substantial equivalence in performance testing is through simple reviews of public information. Peer-reviewed journal articles are an obvious choice, but white papers can be a valuable source as well. White papers published by large companies usually include product testing data (screw or plate strength, for example), though precise testing methodology is typically not revealed. Nevertheless, the testing data embedded in these white papers can easily be translated into a comparison table to show testing equivalence. For well-characterized products like pedicle screw systems, interbody fusion devices, and hip stems, a large body of very useful published data exists. The FDA provides a wealth of publicly available data too, through guidance documents. These files typically outline the type of data the agency expects and can also set acceptance criteria.
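The comparison table mentioned above can be assembled with very little tooling. The sketch below is one possible layout, not a required format; the source names, test names, and numbers are invented placeholders and do not come from any real white paper or journal article.

```python
def build_comparison_table(rows):
    """Return a plain-text table comparing published values with
    subject-device results for the same test."""
    header = ("Source", "Test", "Unit", "Published", "Subject", "Subject >= published")
    lines = [" | ".join(header)]
    for row in rows:
        meets = row["subject"] >= row["published"]
        lines.append(" | ".join([
            row["source"],
            row["test"],
            row["unit"],
            f"{row['published']:g}",
            f"{row['subject']:g}",
            "yes" if meets else "no",
        ]))
    return "\n".join(lines)


# Placeholder entries; sources and values are hypothetical.
rows = [
    {"source": "White paper A (hypothetical)", "test": "Static axial compression",
     "unit": "N", "published": 8000.0, "subject": 8600.0},
    {"source": "Journal article B (hypothetical)", "test": "Static torsion",
     "unit": "N*m", "published": 12.0, "subject": 11.5},
]

table = build_comparison_table(rows)
print(table)
```

Because white papers rarely disclose precise methodology, a column noting the test method or standard, where known, would be a sensible addition.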

Some groups at FDA are more proactive at this than others: The Orthopedic Rapid Comparative Analysis (ORCA) group reviews data from various spinal devices with market approval. This group has published a peer-reviewed article in the Journal of Biomechanics that summarizes static axial compression, shear and torsion, dynamic axial compression, and subsidence. ORCA also is currently evaluating thoracolumbar pedicle screw data. I’m hopeful other FDA groups will consider publishing data on well-characterized devices in the future.

Published articles also can help determine physiological loading requirements, another tool for justifying substantial equivalence. In the case of a custom-designed mechanical testing plan for a novel interbody fusion device, for example, substantial equivalence could be proven via comparison to surrounding bone. Logically, if the vertebral body breaks at a certain loading level, the interbody fusion device must be at least as strong as the surrounding bone (with perhaps an increase to account for safety). Every device is different, but I have been very successful justifying an acceptance criterion based on the argument that surrounding bone would break before the subject device. Physiological parameters are also a very reasonable basis for the number of cycles needed in dynamic testing. The FDA’s guidance document on spinal devices indicates the 5 million cycles used by most companies for dynamic testing is not random, but rather based on expected loading cycles with a built-in safety factor before fusion.
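The bone-strength argument reduces to simple arithmetic, sketched below. The function names, the failure load, and the safety factor are all hypothetical placeholders chosen for illustration; a real criterion would cite published bone-strength data and a justified safety factor.

```python
def acceptance_load(bone_failure_load_n, safety_factor=1.0):
    """Acceptance criterion: the load at which surrounding bone fails,
    scaled up by a safety factor of at least 1."""
    if bone_failure_load_n <= 0:
        raise ValueError("bone failure load must be positive")
    if safety_factor < 1.0:
        raise ValueError("safety factor must be at least 1")
    return bone_failure_load_n * safety_factor


def meets_criterion(device_failure_loads_n, criterion_n):
    """True only if every tested sample failed at or above the criterion."""
    return min(device_failure_loads_n) >= criterion_n


# Placeholder values in newtons; NOT taken from any publication.
criterion = acceptance_load(bone_failure_load_n=4000.0, safety_factor=1.5)
device_loads = [6500.0, 7000.0, 6800.0]

print(criterion)
print(meets_criterion(device_loads, criterion))
```

Checking the weakest sample rather than the mean is a deliberately conservative choice in this sketch.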

Design Changes After Clearance

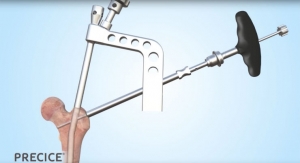

The challenges associated with justifying device acceptance criteria do not necessarily end with proof of substantial equivalence. Once a product is on the market, a company will solicit feedback on the device through many avenues. This data may lead to changes and/or improvements to the device. Modifications that change the risk profile of a product require a new 510(k) submission, even if the change is necessary to mitigate a known risk. The FDA’s new guidance, “Deciding When to Submit a 510(k) for a Change to an Existing Device,” clarifies the agency’s position on submitting new 510(k) applications for product adjustments, even those considered to be safety improvements. During my tenure in this industry, I’ve seen countless product design changes (addressing specific problems) have unintended negative consequences that only surfaced during clinical use. The guidance document also addresses the consequences of not filing a new 510(k) application, noting the choice should be confirmed by successful validation and verification activities. If, for example, a change is made to a pedicle screw tulip head, testing must show the new design is equivalent to the original; parity to initial acceptance criteria is insufficient, as the product must perform the same as the original cleared device. In the past, medtech firms tended to use a letter to file rather than a formal 510(k) application to fix a deficiency found through postmarket surveillance data, and that approach generally was accepted because the improved device was better than its older version. However, this is no longer an acceptable practice and is in direct violation of FDA’s expectations.

The FDA also clarifies its position on labeling changes in its new guidance document. The decision-making flow chart makes it very clear that changes in indications, warnings, precautions, or directions for use require a new 510(k) submission. Directions for use can be fine-tuned for purposes of readability or clarity as long as those changes do not alter the indications for use, though they should nevertheless be justified and documented. Changed indications for use require a new 510(k) submission.

Conclusions

Setting defensible acceptance criteria for both new and modified devices is a critical job in a medical device company. The difference between adequate and poor product performance is either a failed 510(k) submission for new technology or a recall for a cleared but modified device. There are many things a company can do to keep abreast of mechanical performance requirements. One of the best ways to stay well informed about the latest mechanical testing requirements in orthopedics is to become an active member of ASTM or ISO. Most testing for orthopedic devices is driven by an ASTM or an ISO standard. The directors of these groups are very knowledgeable on testing requirements, publicly available testing data, and the newest trends in regulatory requirements. Additionally, FDA typically has representatives on these committees and is very receptive to generic questions. These standards committees are volunteer groups, but the time commitment is well worth the reward of regulatory insight into the latest testing trends.

One final piece of advice: Companies with strong mechanical justifications for performance testing that are undecided about filing a new 510(k) for a design modification should probably submit one. The decision matrices in the FDA guidance document are clear; any uncertainty over filing is indicative of a difficulty justifying the decision against a 510(k). The difference between a letter to file and a 510(k) clearance on a commercially distributed device is that a clearance letter now aligns with FDA expectations. The old way—a letter to file approach—could result in a recall.

Linda Braddon, Ph.D., is president and CEO of Secure BioMed Evaluations. She works with emerging and established companies to provide regulatory, quality, and technical support to both the medical device and biologics industries. Dr. Braddon has a B.S. degree in engineering from Mercer University along with a master of science degree and a Ph.D. in mechanical engineering with a specialization in bioengineering. Her 20 years of experience includes extensive work in orthopedics, dental implants, ophthalmology, respiratory, urology, hydrogels, dura mater substitutes, wound coverings, orthotic devices, and antimicrobial agents.
